Eight years ago, Samueli, then a professor at the University of California, Los Angeles (UCLA), who had been pushing the state of the art of digital broadband communications for more than a decade, joined with his Ph.D. student Henry Nicholas to found Broadcom, now in Irvine, Calif. Their first project was to design the world’s first chips for digital interactive television.

Today Samueli holds patents for DSP-based receiver architectures for a number of digital communications transceivers, including ones for cable television, satellite television, Ethernet, and high-bit-rate digital subscriber line services. And Broadcom now makes more than 95 percent of the chips that go into U.S. digital cable set-top boxes and cable modems. Such modems are viewed as the foundation for the future of data, voice, and video services to the home.

Broadcom also has big chunks of the markets for chips for Ethernet transceivers, high-definition television (HDTV) receivers, digital subscriber line modems (the leading alternative to cable modems), and direct broadcast satellite receivers.

How a DIY radio kit launched Henry Samueli’s career

Samueli’s path toward becoming one of today’s key players in digital communications started 33 years ago, when he was a seventh grader. Required to take a shop class at his West Hollywood, Calif., junior high school, he selected electric shop. During the term, each student was expected to build a crystal radio from a kit, using a single crystal and an antenna wound on a toilet paper tube. Bored with the prospect, Samueli asked his teacher if, instead, he could build a five-tube short-wave radio he had read about in a Heathkit catalog. [Editor’s note: Samueli later determined that the kit was a Graymark 506B.]

Initially, the teacher said no—the short-wave radio was a ninth grade project—but Samueli persisted and eventually prevailed. It wasn’t easy, even though it was a cookbook project. Samueli had never done anything like it, and he recalls slaving away on it every night all term. Finally, he brought the assembled kit to school, the teacher plugged it in, and it worked. “The teacher’s jaw hit the floor,” Samueli said. “He said nobody gets it right the first time.” The teacher predicted that Samueli would be a successful electrical engineer someday. It was the first time Samueli had heard of such a profession.

The radio project had fascinated him. Though he had managed to put it together, he had no idea how it worked. “That became my mission in life, from seventh grade onward, to find out how radios work,” he told IEEE Spectrum. It took him nine years of college, a Ph.D. thesis—a highly theoretical paper entitled “Nonperiodic forced overflow oscillations in digital filters”—and a few years in industry before he felt he had satisfied that goal.

In pursuit of this understanding, Samueli applied to UCLA, which had a good engineering department. It was also affordable because he could live with his parents. (His parents, Holocaust survivors from Krakow, Poland, who operated a series of small businesses in Los Angeles, were committed to supporting his education but couldn’t afford to send him away to school.) After he received his master’s degree at UCLA, he went straight through to a Ph.D. program, turning down a job offer from the then Bell Telephone Laboratories, in Murray Hill, N.J.

The defense industry beckons

With the completion of his doctoral thesis, Samueli joined a friend as a member of the technical staff at TRW, in Redondo Beach, Calif.

“In the late ’70s and early ’80s, the defense industry was at its peak,” he recalled. “All the top students at the local colleges went into defense. Hughes and TRW were the top two—you almost didn’t consider any other company.”

At TRW, Samueli was initially assigned to a communications systems group that was analyzing the wartime survivability of U.S. communications networks. A year later, he was moved into a design group that was developing circuit boards for military satellite and radio communications systems.

His first assignment in that position was challenging. “I had to design a communications processor box,” he recalled. This box was part of a transmitter/receiver for a digital link in a NASA ground station. It was one of the first applications of DSP technology to a satellite communications system.

“Since in those days each chip contained very few functions (like a four-bit adder or a quad flip-flop), you had to connect up hundreds or thousands of such digital logic chips to actually build a reasonable system,” Samueli said. “It was overwhelmingly complex, this fairly large box of hardware with about 1200 logic chips and several LSI [large-scale integration] multiplier chips that I had to get working all by myself, with only a technician to help. They effectively threw me into the ocean and told me to sink or swim.”

“I found out later,” he said, “that my boss didn’t think I could do it. He had given me the assignment as a test, thinking that I would eventually yell for help.” Samueli had been given four months to complete the task; he did it in two and a half.


After that, he was given his pick of any project in the department. He chose a contract to design a high-speed digital radio modem for the U.S. Army—a project that set him on the path that eventually led to the founding of Broadcom. This was a 26-Mb/s microwave digital radio, which, being built with digital circuits, pushed the limits of technology at a time when typical digital radios were designed around analog circuits. Succeeding required designing the fastest digital adaptive equalizer—a circuit that corrects for distortions—ever built.
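An adaptive equalizer learns a filter that undoes the distortion a channel adds to the signal. One common way to adapt it is the least-mean-squares (LMS) rule, sketched below on a hypothetical channel in which each symbol leaks half its amplitude into the next. The article does not say which adaptation algorithm the TRW modem used, so the tap count, the step size `mu`, the channel model, and the name `lms_equalizer` are all assumptions chosen for illustration.

```python
import random

def lms_equalizer(received, desired, num_taps=5, mu=0.01):
    """Train a linear FIR equalizer with the least-mean-squares (LMS) rule.

    received: samples after the distorting channel
    desired:  known training symbols; the equalizer targets desired[n - delay]
    Returns the final tap weights and the per-sample error history.
    """
    delay = num_taps // 2
    weights = [0.0] * num_taps
    weights[delay] = 1.0  # start as a pass-through ("center spike")
    errors = []
    for n in range(num_taps - 1, len(received)):
        # Most recent sample first: window[k] = received[n - k]
        window = received[n - num_taps + 1 : n + 1][::-1]
        output = sum(w * x for w, x in zip(weights, window))
        error = desired[n - delay] - output
        # LMS update: step each tap against the gradient of the squared error
        weights = [w + mu * error * x for w, x in zip(weights, window)]
        errors.append(error)
    return weights, errors

# Hypothetical channel: each symbol leaks half its amplitude into the next one
random.seed(1)
symbols = [random.choice([-1.0, 1.0]) for _ in range(3000)]
received = [s + 0.5 * (symbols[i - 1] if i > 0 else 0.0) for i, s in enumerate(symbols)]
taps, errs = lms_equalizer(received, symbols)
```

Comparing the mean squared error at the start and end of `errs` is a quick check that the taps are converging toward an inverse of the channel.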

Peter McAdam, director of advanced technology for TRW’s electronics and technology division, was several management layers above Samueli at the time, but he recalls this project.

“We were designing digital radios,” McAdam told Spectrum, “and he was doing digital signal demodulators for them. He implemented them using digital phase-lock-loop technology before the rest of the world had thought of doing such a thing. We didn’t have to do that part of it digitally, but he pushed it—he insisted we could do it, and got us all inventing algorithms to do so.”
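To give a feel for what a digital phase-lock loop does, here is a minimal sketch of a generic textbook second-order loop, not the TRW design: a phase detector measures the angle between the incoming carrier and the loop's own estimate, a proportional-plus-integral loop filter smooths that error, and a numerically controlled oscillator accumulates the corrected phase. The gains `kp` and `ki`, the test carrier, and the name `digital_pll` are arbitrary choices for illustration.

```python
import cmath

def digital_pll(samples, kp=0.1, ki=0.005):
    """Second-order (proportional + integral) digital PLL tracking a complex carrier.

    Returns the phase estimates (estimates[n] is the phase the loop will use
    for sample n + 1) and the final frequency estimate in radians per sample.
    """
    phase = 0.0  # numerically controlled oscillator (NCO) phase, radians
    freq = 0.0   # loop-filter integrator: estimated frequency offset
    estimates = []
    for x in samples:
        # Phase detector: angle of the input after de-rotating by the NCO phase
        error = cmath.phase(x * cmath.exp(-1j * phase))
        freq += ki * error          # integral path learns the frequency offset
        phase += freq + kp * error  # NCO advances by the filtered correction
        estimates.append(phase)
    return estimates, freq

# Carrier with an unknown phase offset (0.7 rad) and frequency offset (0.02 rad/sample)
carrier = [cmath.exp(1j * (0.7 + 0.02 * n)) for n in range(500)]
phases, freq_estimate = digital_pll(carrier)
```

The integrator is what lets the loop follow a frequency offset with no lasting phase error; a first-order loop (proportional path only) would lag by a constant angle.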

The lure of academia

Since joining TRW in 1980, Samueli had been simultaneously teaching college engineering courses in his spare time—first at California State University, Northridge, and then at UCLA. In 1985 UCLA offered him a full-time position.

Samueli jumped at the chance. “Not that I didn’t like TRW. To this day I think it was one of the best jobs I could have had. Working in the defense industry, you are given all the money and resources you need in order to develop the greatest, state-of-the-art technology. But the opportunity to be a professor at one of the top universities in the world was too good to pass up.”

The best part of academia, Samueli thinks, is working with students. “They are so energetic and hardworking and motivated to learn,” he said. “It is a thrilling environment.”

“Coming from a Jewish family,” he mused, “the big push was to become a medical doctor. But working in a hospital around sick people all day versus working at a university where you have all these bright eager young minds—there is just no comparison.”

The other bonus of the university environment is academic freedom. “You pick a subject and go for it. You have to raise the money, but nobody tells you what to do.”

Nicolaos G. Alexopoulos, now dean of engineering at the University of California, Irvine, was the chair of UCLA’s electrical engineering department during Samueli’s tenure. He recalled that Samueli was good at getting corporate research grants and donations.

“I had created a corporate affiliates program for the department,” Alexopoulos said, “and Henry must have raised several million dollars in equipment donations and affiliate memberships. He was successful because the corporations related to his work, respected his research, and could tell he had genuine interest in helping the department, not just himself.”

At UCLA, Samueli launched a research program in applying IC technology to high-speed digital communications, building on the digital modem project he had completed at TRW. The first Ph.D. student to join his group was Henry Nicholas, a chip designer from TRW who was working on his doctorate part time. Nicholas’s chip design background complemented Samueli’s systems architecture background, and he became a partner in building the research group, which, at its peak, had 15 graduate students.

Broadcom cofounders Henry Samueli [left] and Henry Nicholas pose in front of the company’s headquarters in Irvine, Calif., in 1999. Ted Soqui/Corbis/Getty Images


As the partnership continued through the later founding of Broadcom, Nicholas complemented Samueli in another way.

“The two are good cop/bad cop,” McAdam told Spectrum. “Henry [Samueli] is really mild, really nice. In a competitive environment he can be too nice. But Nick [Henry Nicholas] takes care of that, thank you very much.”

Others who have worked with the two of them agree. And Samueli himself sees Nicholas as the ideal balance to his laid-back personality. “I’m Mr. Nice Guy,” he told Spectrum. “I’m not confrontational. So I get very frustrated when something goes wrong because I don’t like to yell at people.”

“Nick, on the other hand,” he said, “is never shy about yelling. And you need somebody like that to run a successful corporation. It has turned out to be a tremendous partnership; we are complementary in every respect.”

Henry Samueli’s first start-up

In 1988, with his UCLA research program in full swing, pushing digital communications chips to higher and higher speeds, Samueli got a phone call from two of his former TRW co-workers.

They were starting a company, PairGain Technologies, in Tustin, Calif., to build digital subscriber line (DSL) transceivers, and they needed a chief architect. Their initial product operated at integrated-services digital network (ISDN) speeds (128 kb/s), which were standard at the time. But the company then made a technological leap by developing a high-bit-rate DSL (HDSL) transceiver that operated at 1.5 Mb/s over ordinary phone lines.

Ben Itri, now chief technology officer of PairGain, was behind the effort to recruit Samueli. “We needed someone who could give us credibility in a theoretical area,” Itri said. “What we were proposing had adaptive digital filters, and Henry had done a lot of work in that area.” (Adaptive digital filters correct for the distortion that occurs when a broadband digital signal is sent over the telephone network, which is optimized for analog voice communications.)

“He also gave us access to a pool of talented people at UCLA,” Itri told Spectrum. “After he was on board, we pitched the company to venture capitalists. They respected his background. Without him, it would have been very difficult.”

While the PairGain job was of interest to Samueli, he was not ready to leave UCLA, so he signed on as a one-day-a-week PairGain consultant. He immediately brought Nicholas on board; Nicholas added a PairGain post to his already busy schedule of TRW work and Ph.D. research at UCLA. Samueli worked on the architecture, Nicholas launched a chip design group, and the company’s first product, the pioneering HDSL transceiver, was introduced in 1991. PairGain subsequently achieved about an 80 percent market share for HDSL transmission equipment—the boxes that allow the installation of high-speed digital connections between businesses and their local phone companies.

“I got stock options to join PairGain,” Samueli said. “I had no idea what that meant at the time, but, boy, did I learn quick.” PairGain went public in 1993, and Samueli’s stock subsequently became worth several million dollars.

How Broadcom got its start

Meanwhile, Samueli’s research group at UCLA was designing all sorts of digital communications chips, using novel algorithms to implement things like QAM (quadrature amplitude modulation) modems and equalizers that had never before been done digitally. Next he proposed developing ICs for an all-digital modem that would operate at several hundred megabits per second, which was far beyond existing digital modem speeds. Samueli published his results in over 100 papers and spoke at numerous conferences, and many companies were interested in applying this work to real products.
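In QAM, each symbol carries bits on two carriers in quadrature, so a symbol is a point in the complex plane, and the last step of a digital receiver is a slicer that picks the nearest constellation point. The minimal 16-QAM sketch below shows both directions, assuming a Gray-coded mapping of two bits per axis (a common choice, not necessarily the one Samueli’s group used); the function and table names are hypothetical.

```python
# Assumed Gray-coded levels for one 16-QAM axis: 2 bits -> amplitude level
GRAY_LEVELS = {(0, 0): -3, (0, 1): -1, (1, 1): 1, (1, 0): 3}
LEVEL_BITS = {level: bits for bits, level in GRAY_LEVELS.items()}

def qam16_modulate(bits):
    """Map each group of 4 bits to a complex symbol (I from bits 0-1, Q from bits 2-3)."""
    if len(bits) % 4:
        raise ValueError("bit count must be a multiple of 4")
    symbols = []
    for i in range(0, len(bits), 4):
        in_phase = GRAY_LEVELS[(bits[i], bits[i + 1])]
        quadrature = GRAY_LEVELS[(bits[i + 2], bits[i + 3])]
        symbols.append(complex(in_phase, quadrature))
    return symbols

def slice_level(x):
    """Slicer for one axis: choose the nearest of the levels -3, -1, 1, 3."""
    return min((-3, -1, 1, 3), key=lambda level: abs(x - level))

def qam16_demodulate(symbols):
    """Slice the I and Q parts of each (possibly noisy) symbol and recover its bits."""
    bits = []
    for s in symbols:
        bits.extend(LEVEL_BITS[slice_level(s.real)])
        bits.extend(LEVEL_BITS[slice_level(s.imag)])
    return bits
```

With Gray coding, adjacent levels differ in only one bit, so a symbol nudged across a decision boundary by noise usually costs a single bit error rather than two.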

“People were calling us up and saying, ‘That was a really interesting chip design you published. Have you considered commercializing it?’ ” Samueli said. In 1991 he decided to try. He and Nicholas incorporated Broadcom, set up the company in Nicholas’s spare bedroom, and signed development contracts with Scientific Atlanta, Intel, TRW, and the U.S. Air Force. Samueli kept his UCLA post and his PairGain consulting job, hiring his graduate students as consultants to implement much of the initial work at Broadcom.

“I had three business cards: UCLA professor, chief scientist of PairGain, and vice president of research and development of Broadcom.” (Nicholas, who may have had better business and negotiating skills, became Broadcom’s president and chief executive officer; the two are co-chairmen of the company.)

The contract for Scientific Atlanta, of Norcross, Ga., clearly pushed the state of the art. New York City-based Time Warner was preparing to deploy an ambitious test of interactive digital television services in Orlando, Fla., and Scientific Atlanta had contracted with the company to build the world’s first digital cable set-top box. (Existing cable set-top boxes were analog.) What was needed was a chip-based modem to serve as the cable signal receiver for that digital box.

Broadcom completed the modem in 1994 in three chips, at a time when other digital modems filled many circuit boards. Samueli got a patent for the work on the all-digital cable receiver architecture. Using Broadcom’s design, Scientific Atlanta built 2,000 cable boxes for the Orlando field trial. While the trial was a technical success, it was a marketing failure. Time Warner quietly pulled the plug on the project, and nobody talked about interactive TV for several years. Only now is the ubiquity of the World Wide Web making interactive TV a marketable product.

In retrospect, the Time Warner test appears to have been about five years too early. Today, Internet TV products that merge TV viewing with Web access perform many of the functions envisioned by Time Warner years ago.

Broadcom’s contract with Intel Corp., of Santa Clara, Calif., was for a chip implementing a 100-Mb/s Ethernet transceiver for a local-area network (LAN), using DSP techniques. (Available Ethernet chips at the time had a top speed of 10 Mb/s.) The chip, which shipped in 1995, became the first DSP-based transceiver for LANs. The company recently announced a 1-Gb/s Ethernet chip based on similar DSP technology.

For TRW, Broadcom designed a digital frequency synthesizer chip for a military satellite application. Under the Air Force contract, Broadcom’s staff developed an anti-jam filter chip for a Global Positioning System satellite receiver.

The three-chip digital modem for Scientific Atlanta got Broadcom into the cable TV business. The Ethernet chip for Intel got the company into the LAN business. Those are the company’s largest markets today. Later, related contracts drove the company into new markets. For example, one for DSL transceivers based on Broadcom’s QAM cable modem architecture, designed for Nortel Networks, of Brampton, Ont., Canada, was Broadcom’s entry into the DSL chip market. Another venture, a development partnership with Sony Corp., Tokyo, subsequently brought the company into the HDTV receiver IC business.

But Broadcom did not restrict itself to handling development contracts alone for long. The modem chips it had developed for Scientific Atlanta brought other customers knocking on its door. So in 1994, the then 15-person company (14 engineers and an office manager) added a vice president of marketing and put together its first product line, soon establishing itself as the leader in the cable modem chip industry.

At the time, cable modems were emerging as a broadband Internet access platform for the home market. Their downstream speeds, which today are several megabits per second, offer faster Internet access than either 56-kb/s modems or DSL links. Their upstream speeds, though slower, also beat those of competing technologies. Cable operators can provide conventional telephone service over the modems as well.


Crucial to Broadcom’s chip designs was the need to sort out the signals being sent to subscribers from the cable operator’s headend. Unlike the dedicated lines in the point-to-point links used by phone modems, cable modems share a line to the headend in a point-to-multipoint configuration. Downstream, a continuous bit stream is broadcast to all subscribers; each modem is assigned a unique address, and data for different subscribers is time-division multiplexed onto that stream, with each packet tagged with the address of the modem it is meant for. The upstream uses a time-division multiple access (TDMA) protocol whereby users send requests to transmit data to the headend and are then assigned specific time slots in which to send the data in short bursts.
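Both directions of that scheme fit in a few lines of code. This is a toy sketch under stated assumptions: the headend grants each request a contiguous run of slots in a fixed-size frame and defers whatever does not fit, and downstream filtering is a plain address comparison. It does not reproduce the actual DOCSIS request and grant message formats; `Grant`, `schedule_upstream`, and `frames_for_modem` are hypothetical names.

```python
from dataclasses import dataclass

@dataclass
class Grant:
    modem: str       # address of the modem allowed to transmit
    first_slot: int  # index of its first time slot in the frame
    num_slots: int   # length of its burst, in slots

def schedule_upstream(requests, slots_per_frame):
    """Toy headend scheduler: grant each request a contiguous run of time slots.

    requests: list of (modem_address, slots_needed) in arrival order.
    Requests that do not fit in this frame are returned to be retried later.
    """
    grants, deferred = [], []
    next_slot = 0
    for modem, needed in requests:
        if next_slot + needed <= slots_per_frame:
            grants.append(Grant(modem, next_slot, needed))
            next_slot += needed
        else:
            deferred.append((modem, needed))
    return grants, deferred

def frames_for_modem(downstream, my_address):
    """Downstream: every modem sees every frame but keeps only those addressed to it."""
    return [payload for address, payload in downstream if address == my_address]
```

Because only the headend hands out slots, upstream bursts from different homes never overlap on the shared cable, which is the point of the request-then-grant exchange.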

The challenge of a single-chip cable modem design, according to Samueli, is coping with its high degree of complexity. The device incorporates a high-speed receiver and transmitter, both with precision analog front ends, as well as a complex media access control protocol engine. Successful execution requires a team with a broad range of expertise, including algorithm and protocol experts, DSP architects, application-specific IC (ASIC) engineers, and full custom and mixed-signal circuit designers.

Broadcom also became instrumental in writing the DOCSIS (Data-Over-Cable Service Interface Specification) standard for cable modems, cooperating with General Instrument and LANcity, under the auspices of Cable Television Laboratories (CableLabs), the cable industry’s research arm in Louisville, Colo.

Approved in 1998, DOCSIS is now the industry standard for all cable modems being built for the U.S. market, and was recently adopted by the International Telecommunication Union as an international cable modem standard. This market is poised for rapid growth as cable modems become readily available through computer retailers, so customers can plug them into a cable line themselves rather than rent the devices from their cable service providers. DOCSIS modems can receive data at up to 43 Mb/s downstream and send at up to 10 Mb/s upstream, with the upstream channel shared among subscribers by TDMA.
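Those two figures follow from symbol rate times bits per symbol. The sketch below assumes the commonly cited DOCSIS 1.x channel parameters, which the article does not state: 256-QAM at about 5.36 million symbols per second in a 6-MHz downstream channel, and 16-QAM at 2.56 million symbols per second upstream. The results are raw channel rates before error-correction and framing overhead, so usable throughput is somewhat lower.

```python
import math

def raw_bit_rate(symbol_rate, constellation_points):
    """Raw bit rate in b/s: symbols per second times bits per symbol (log2 of constellation size)."""
    return symbol_rate * math.log2(constellation_points)

# Assumed DOCSIS 1.x parameters (not stated in the article)
downstream = raw_bit_rate(5.360537e6, 256)  # 256-QAM, 8 bits/symbol
upstream = raw_bit_rate(2.56e6, 16)         # 16-QAM, 4 bits/symbol
print(f"downstream: {downstream / 1e6:.2f} Mb/s")  # downstream: 42.88 Mb/s
print(f"upstream: {upstream / 1e6:.2f} Mb/s")      # upstream: 10.24 Mb/s
```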

Even though Broadcom was being run with a small staff, Nicholas and Samueli were thinking big fairly early on. Steve Tsubota, now director of Broadcom’s cable TV business unit, interviewed for a job with Samueli in 1994. Throughout the discussion, he recalled, Samueli was low key and modest. Then Tsubota asked him where he saw Broadcom going in the future. Samueli, with his 20-person company crammed into offices shared with a law firm, answered, “We want to be the Intel of communications.”

Managing millionaires

Four years later, on 17 April 1998, the then 350-employee company went public, making nearly two-thirds of its employees paper millionaires. (Because Samueli and Nicholas did not seek venture capital investment for Broadcom, they were each able to retain over 20 percent of the company for themselves and still be generous with stock options.)

Broadcom’s stock price has appreciated by more than a factor of 10 since its initial public offering. Samueli is now a billionaire three times over, running an R&D organization with some 400 engineers, more than 50 of whom are Ph.D.s. The company as a whole now has about 700 employees, and Samueli oversees Broadcom’s research laboratories in Irvine, San Jose, and San Diego, Calif.; Atlanta, Ga.; Phoenix, Ariz.; the Netherlands; Singapore; and Bangalore, India.

Samueli claims he is not a start-up junkie; Broadcom will probably be his last start-up venture: “I can’t see myself going through that punishment all over again. So many factors of success are out of your control. I don’t believe I could create another Broadcom again, so I wouldn’t even want to try.”

“I don’t think my family would put up with it, either,” he added. “Eighty-hour workweeks are very stressful on family life. I think I have the most understanding and tolerant wife in the world. There isn’t anything I wouldn’t do for her, given all that she has done for me, and her No. 1 request is for me to spend more time at home.”

The money hasn’t changed him much, colleagues say. His one splurge was to buy a house on the ocean (his wife’s lifelong dream). He has also greatly increased his philanthropy, with a focus on university research and on science and math education for students from kindergarten through 12th grade.

“Education is the key to prosperity,” Samueli said. “I hope that by investing back into our educational infrastructure, I can plant the seeds for the next Broadcom.”

He still behaves like a college professor. “I have never given up my professor’s hat,” he told Spectrum. “I love to give lectures, I love to talk to people and teach them things.” He brags about the technical successes of the engineers on his staff and of the papers they presented at recent conferences.

Not an academic alone

But, although UCLA still lists Samueli as a faculty member on a leave of absence, he is not sure that he will ever go back to academia.

“Life in industry is simply too exciting,” he said. “At a university, you are on a treadmill. You bring in a graduate student, give him a research project, he spends three or four or five years on it, then he graduates. All that knowledge he accumulated leaves with him, and you get a fresh student who has to come up the learning curve from the bottom. You spend a lot of time repeating yourself. There is some institutional memory, but every time you have one of your students graduate, you lose a lot, even though industry and society gain from the talent you have created.

“On the other hand, at our company, people don’t leave. They can in theory, but in our eight-year history, we’ve only lost four engineers out of more than 400. So you are not going through a reset every few years. You are on a continuous ramp of knowledge accumulation, and that is a huge benefit. You also have a lot more resources at your disposal: software, computers, chip fabrication.”

Yet another benefit, Samueli told Spectrum, “is the focus on real products. This creates good limits. You don’t do something unless there is a real application for it. You get closure, completion, and success, and that is rewarding in and of itself.

“The success of Broadcom has brought me enormous happiness in many respects; the most exciting to me is the ability to create such extensive success and happiness for so many people. At the university, I was successful, but it was on a much smaller scale. Here, some 400 engineers have become very successful, financially as well as professionally.”

Alexopoulos, of the University of California, Irvine, confirms that, while at heart Samueli is an academic, “he is also a doer. He wants to see that his work has significant and global impact, not only in providing technology for improving society, but also in creating meaningful and challenging employment for engineers and nonengineers alike.”

Although much of Samueli’s success came from his independent technical achievements, as a manager, he is a people person. Observed at a recent meeting of his laboratory heads and other key staff members, Samueli sat quietly when technical problems were discussed, but quickly jumped in during discussions about new hires, potential engineering recruits, and other human resources issues. He was a little surprised when this was pointed out to him, then said: “I think recruiting is of paramount importance to the success of most high-tech companies. I have confidence that technical issues can be solved by the talented people we have at the company, but due to my networking in the research community, one of my key roles is in identifying the best people.”

The ‘nucleus of the black hole’

What often draws people to the company are Samueli’s technical credentials and reputation for sharing the credit. Said Broadcom’s Tsubota: “He is the nucleus of the black hole—an irresistible force,” attracting talent to Broadcom out of professorships, secure jobs, and corporate fellow positions.

And he has a good memory for people’s strengths and weaknesses. Anne Cole, who was Broadcom’s second employee and is now controller for both the cable business unit and engineering, told Spectrum that when she interviewed at Broadcom, several years after taking an introductory engineering class from Samueli, he surprised her by confronting her with her academic record. “You turned in all your homework and you blew the final,” he told her. He ended up hiring her as an office manager (she had since earned an MBA), not an engineer.

He also sees helping his staff logistically as a key role, and, in that, he may be the engineer’s dream boss. At the previously mentioned meeting, the company’s information systems director presented a problem: Engineers were facing sometimes extensive delays in running computing jobs on the company’s large servers—in part because other engineers were using those same servers to run simple tasks that could be easily run from a desktop workstation. Eliminating the delays would require changes in computer utilization or the purchase of US $650,000 worth of additional servers.

Another manager might have responded by creating an official policy listing what jobs could and could not be run on the company’s shared servers, burdening his engineers with bureaucracy. Samueli barely hesitated. “From an engineering perspective,” he said, “buy the machines.”

But perhaps his most important attribute as a manager is his niceness. People at Broadcom often work until two in the morning. Samueli says it is because they are competitive and want their products to win in the marketplace. But another motive seems to be at play: Broadcom employees seem to want to make Samueli happy. Besides being the technical center of the company, Samueli is viewed as its moral center, Tsubota said.

“The engineers here don’t want to disappoint him,” controller Cole told Spectrum. “They want to meet his expectations—and he has very high expectations.” Said one employee, “When you don’t come through for Henry [Samueli], it hurts a lot more than when Nick [CEO Nicholas] yells at you.”

This article appeared in the September 1999 print issue.


One of the authors (Ghodssi) holds a miniaturized

One of the authors (Ghodssi) holds a miniaturized  A high-speed video shows how a capsule deploys microneedles to deliver drugs into intestinal tissue.

A high-speed video shows how a capsule deploys microneedles to deliver drugs into intestinal tissue.

Microneedle designs for drug-delivery capsules have evolved over the years. An early prototype [top] used microneedle anchors to hold a capsule in place. Later designs adopted molded microneedle arrays [center] for more uniform fabrication. The most recent version [bottom] integrates hollow microinjector needles, allowing more precise and controllable

Microneedle designs for drug-delivery capsules have evolved over the years. An early prototype [top] used microneedle anchors to hold a capsule in place. Later designs adopted molded microneedle arrays [center] for more uniform fabrication. The most recent version [bottom] integrates hollow microinjector needles, allowing more precise and controllable  Embedded sensors can probe the gut—for example, measuring the bioimpedance of the intestinal lining to detect disease—and transmit the data wirelessly.All illustrations: Chris Philpot

Embedded sensors can probe the gut—for example, measuring the bioimpedance of the intestinal lining to detect disease—and transmit the data wirelessly.All illustrations: Chris Philpot Miniature actuators can trigger drug release at specific sites in the gut, boosting effectiveness while limiting side effects.

Miniature actuators can trigger drug release at specific sites in the gut, boosting effectiveness while limiting side effects. A spring-loaded mechanism can collect a tiny biopsy sample from the gut wall and store it during the capsule’s passage through the digestive system.

A spring-loaded mechanism can collect a tiny biopsy sample from the gut wall and store it during the capsule’s passage through the digestive system. A microbe-powered biobattery designed for ingestible devices dissolves in water within an hour. Seokheun Choi/Binghamton University

A microbe-powered biobattery designed for ingestible devices dissolves in water within an hour. Seokheun Choi/Binghamton University

Sheffield’s Centre for Research into Electrical Energy Storage and Applications (CREESA) operates one of the UK’s only research-led, grid-connected, multi-megawatt battery energy storage testbeds. The University of Sheffield

Sheffield’s Centre for Research into Electrical Energy Storage and Applications (CREESA) operates one of the UK’s only research-led, grid-connected, multi-megawatt battery energy storage testbeds. The University of Sheffield Dan Gladwin, Professor of Electrical and Control Systems Engineering, leads Sheffield’s research into grid-connected energy storage.The University of Sheffield

Dan Gladwin, Professor of Electrical and Control Systems Engineering, leads Sheffield’s research into grid-connected energy storage.The University of Sheffield Sheffield’s Centre for Research into Electrical Energy Storage and Applications (CREESA) enables researchers to test storage technologies not just in simulation or controlled cycling rigs, but under full-scale, live grid conditions.The University of Sheffield

Sheffield’s Centre for Research into Electrical Energy Storage and Applications (CREESA) enables researchers to test storage technologies not just in simulation or controlled cycling rigs, but under full-scale, live grid conditions.The University of Sheffield One of Sheffield’s earliest breakthroughs came with the installation of a 2 MW / 1 MWh lithium titanate demonstrator. Professor Gladwin led the engineering, design, installation, and commissioning of the system, establishing one of UK’s first independent megawatt scale storage platforms.The University of Sheffield

One of Sheffield’s earliest breakthroughs came with the installation of a 2 MW / 1 MWh lithium titanate demonstrator. Professor Gladwin led the engineering, design, installation, and commissioning of the system, establishing one of UK’s first independent megawatt scale storage platforms.The University of Sheffield

Ruslan Nagimov, the principal infrastructure engineer for Cloud Operations and Innovation at Microsoft, stands near the world’s first HTS-powered rack prototype.Microsoft

Ruslan Nagimov, the principal infrastructure engineer for Cloud Operations and Innovation at Microsoft, stands near the world’s first HTS-powered rack prototype.Microsoft

Carl Anderson [top] sits beside the magnet cloud chamber he used to discover the positron. His cloud-chamber photograph [bottom] from 1932 shows the curved track of a positron, the first known antimatter particle.

The Standard Model catalogs the known fundamental particles of matter and the forces that govern them, but leaves major mysteries unresolved.

An image from a single collision at the LHC shows an unusually complex spray of particles, flagged as anomalous by machine learning algorithms.

CERN

Figure 1. A shift-left, concurrent build of IC chips performs simultaneously multiple tasks that used to be done sequentially.

Priyank Jain leads product management for Calibre Interfaces at Siemens EDA. Siemens

Figure 2. The Calibre Vision AI software automates and simplifies the chip-level DRC verification process. Siemens

Figure 3. Results load time for the traditional DRC debug flow compared with the Calibre Vision AI flow. Siemens

From 2008 to 2025, Nigeria has experienced extraordinary growth in both the number of undersea high-speed cables landing on its shores and the buildout of broadband networks, especially in its cities. Still, fixed-line broadband is unaffordable for most Nigerians, and about half of the population has no access.

A Phase3 Telecom worker [left] installs fiber-optic cables on power poles in Abuja, Nigeria. Abdullateef Aliyu [right], Phase3’s general manager for projects, says the country is using only around 25 percent of the capacity of its undersea cables. Andrew Esiebo

Computer science student Emmanuella Njoku has found a broadband-enabled side gig: creating YouTube videos. Andrew Esiebo

Computer Village in Lagos is Nigeria’s main hub for electronics. Andrew Esiebo

NTEL’s chief information officer, Anthony Adegbola, inspects broadband equipment at the company’s data center in Lagos, which still houses obsolete coaxial cable boxes [top]. Andrew Esiebo

In Lagos’s Computer Village, you can buy or sell a mobile phone or computer, or get yours repaired. Andrew Esiebo

Fiber-optic cable spills from an open manhole in Lagos. Local gangs may cut the cables or steal components. Andrew Esiebo

In Tungan Ashere, the Internet hub operated by the Centre for Information Technology and Development attracts residents. Andrew Esiebo

Usman Isah Dandari [standing] coordinates several projects, like the one in Tungan Ashere, that provide affordable broadband access. Andrew Esiebo

Funke Opeke founded MainOne to build Nigeria’s first private undersea fiber-optic cable. George Osodi/Bloomberg/Getty Images

Broadcom cofounders Henry Samueli [left] and Henry Nicholas pose in front of the company’s headquarters in Irvine, Calif., in 1999. Ted Soqui/Corbis/Getty Images

Henry Samueli is this year’s recipient of the

On a cruise to

Henry Samueli and his wife, Susan, celebrate the Stanley Cup victory for the Anaheim Ducks

Source image:

Oliver Killig/HZDR

Seyed Reza Sandoghchi and Ghafour Amouzad Mahdiraji/Microsoft

Taara

Elisa McGhee

Mirko Pittaluga, Yuen San Lo, et al.

Christoph Burgstedt/Science Photo Library/Alamy

Davide Comai

Researchers from Kyocera tested a high-speed, underwater optical

NATO’s HEIST project is now investigating ways to protect member countries’ undersea Internet lines, including these 22 Atlantic cable paths, by quickly detecting cable damage and rerouting data to

The startup Vector Atomic uses a vapor of

Inside the briefcase-size optical atomic clock. A laser (1) shines into a glass cell containing atomic vapor (2). The atoms absorb light at only a very precise frequency. A detector (3) measures the amount of absorption and uses that to stabilize the laser at the correct frequency. A

Vector Atomic, QuantX Labs, and Infleqtion all have plans to send prototypes of their clocks into space. QuantX Labs has designed a 20-liter engineering model of its space clock [left]. QuantX Labs
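The briefcase-clock caption describes a feedback loop: the detector’s absorption reading is used to hold the laser on the atomic resonance. The toy simulation below illustrates that idea under assumptions not in the caption: the absorption line is modeled as a Lorentzian, the error signal comes from dithering the laser frequency up and down (a common locking approach, not necessarily the scheme used in the pictured clock), and frequencies are in arbitrary units. The names `transmission` and `lock_laser` and all parameter values are hypothetical.

```python
import random


def transmission(f_laser, f_atom=0.0, depth=0.5, gamma=1.0):
    """Fraction of laser light reaching the detector.

    Assumed model: 1 minus a Lorentzian absorption dip of the given depth and
    half-width gamma, centered on the atomic resonance f_atom.
    """
    detuning = (f_laser - f_atom) / gamma
    return 1.0 - depth / (1.0 + detuning * detuning)


def lock_laser(f_start, f_atom=0.0, steps=2000, dither=0.2, gain=2.0,
               drift=0.01, seed=1):
    """Servo a drifting laser frequency onto the atomic absorption line.

    Each step reads the detector with the laser dithered to f + dither and
    f - dither. The difference is the error signal: negative when the
    resonance lies above f, positive when it lies below, so subtracting
    gain * error steers f toward the line center. A Gaussian random walk
    stands in for free-running laser drift (hypothetical noise model).
    """
    rng = random.Random(seed)
    f = f_start
    for _ in range(steps):
        error = (transmission(f + dither, f_atom)
                 - transmission(f - dither, f_atom))
        f -= gain * error              # integrating correction toward resonance
        f += rng.gauss(0.0, drift)     # uncontrolled drift of the laser
    return f


# Start the laser 3 units below resonance; it should settle near f_atom = 0.
print(f"locked frequency offset: {lock_laser(-3.0):+.3f}")
```

Raising `gain` far enough makes the loop overshoot and oscillate, and starting many line widths away leaves the error signal too weak to beat the drift, a toy version of the limited capture range of a real frequency lock.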

Vector Atomic and QuantX affiliate University of Adelaide installed their optical atomic clocks on a ship [top] to test their robustness in a harsh environment. The performance of Vector Atomic’s clocks [bottom] remained basically unchanged despite the ship’s rocking, temperature swings, and water sprays. The University of Adelaide’s clock degraded somewhat, but the team used the trial to improve their design. Will Lunden

During the sea trials, Vector Atomic’s and the University of Adelaide’s clocks were exposed to the elements. Jon Roslund

The global timing infrastructure. A collection of precise clocks, including hydrogen masers and atomic clocks, is used to create Coordinated Universal Time (UTC). A network of satellites carries atomic clocks of their own, synced to UTC on a regular basis. The satellites then send precise timing to data centers, financial institutions, the

Ted Rappaport

Tom Marzetta

Photo: NYU Wireless

Tom Marzetta with an array

Photo: NYU Wireless

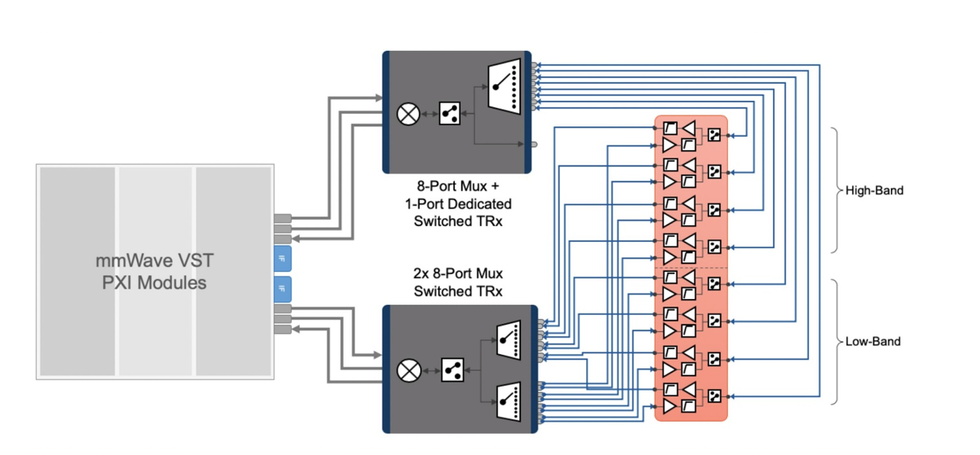

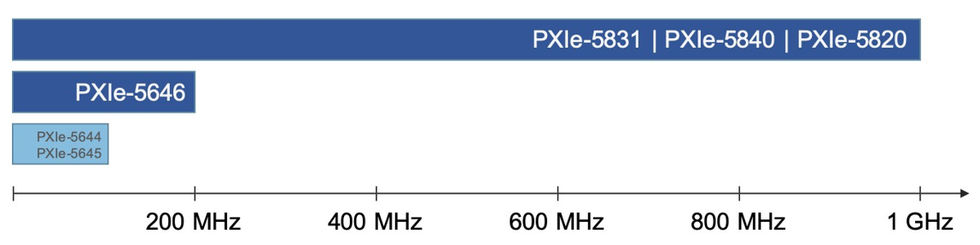

Figure 2. mmWave radio heads with integrated switching.

Figure 3. Example test configuration mapping with mmWave radio heads.

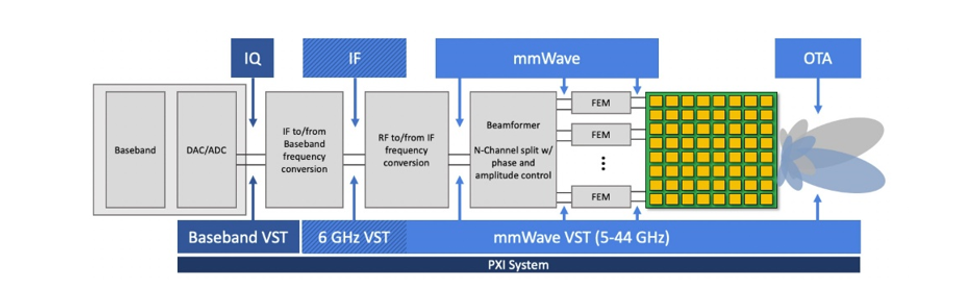

Figure 4. Multiband coverage with IF and mmWave test ports.

Figure 5. mmWave VST features bidirectional test ports for both intermediate and mmWave frequencies.

Figure 6. The mmWave VST offers 1 GHz of instantaneous RF bandwidth with excellent measurement accuracy.

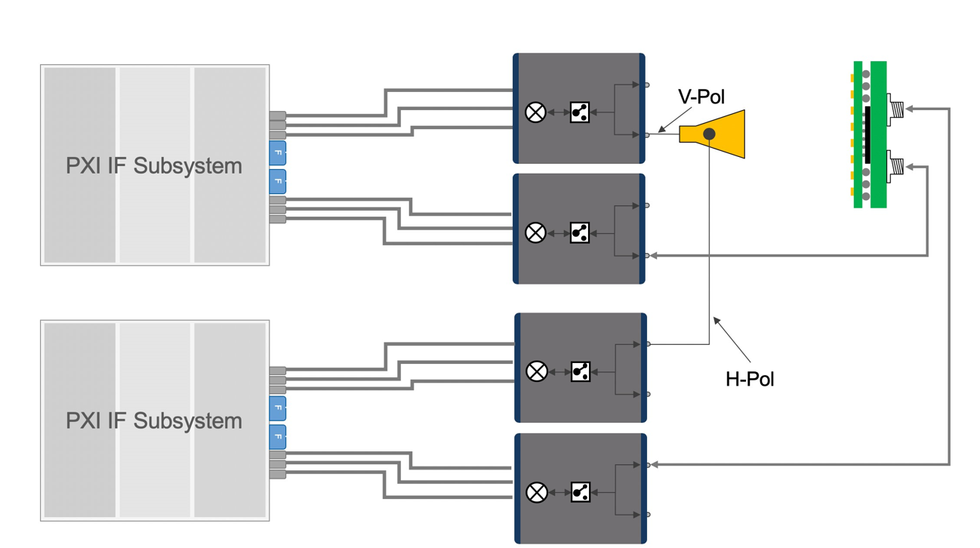

Figure 7. Dual polarization antenna over-the-air test.

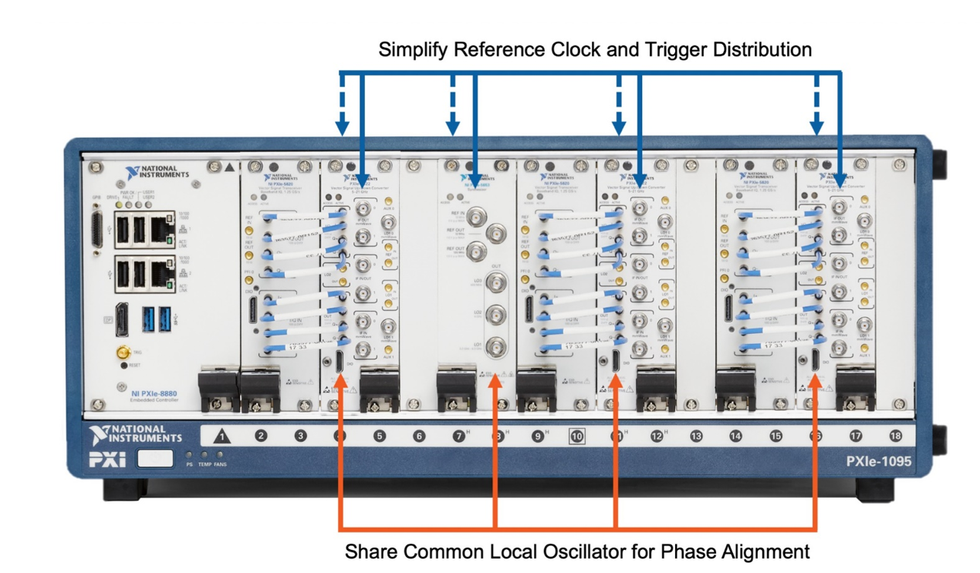

Figure 8. Engineers can synchronize PXIe-5831s in a single 18-slot PXI chassis.

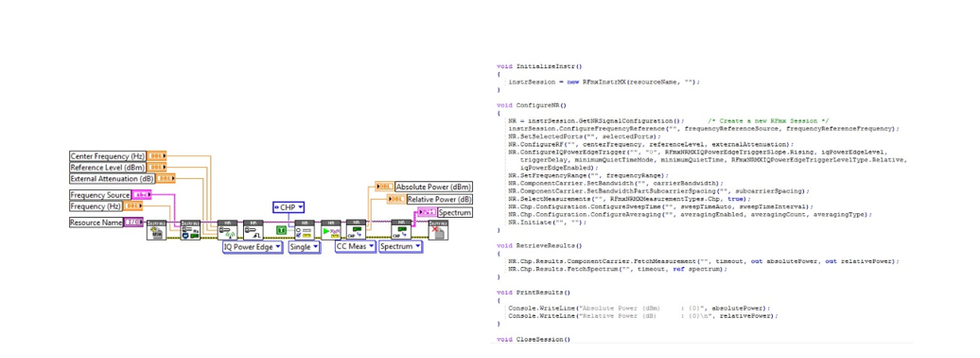

Figure 9. Multi-instrument integration with the PXI platform.

Figure 10. 5G NR measurements performed using NI RFmx in

Figure 11. The VST combines a high-bandwidth vector signal generator, vector signal analyzer, high-speed digital interface, and a user-programmable FPGA onto a single PXI instrument. The mmWave VST (PXIe-5831) extends the VST architecture with innovations focused on addressing the increasing complexity—and uncertainty—of wireless standards, protocols, and technologies.